I know how awkward that title is and I apologize.

OS: Home Assistant 11.2

Core: 2023.12.3

Computer: Raspberry Pi 4 Model B Rev 1.5

Explanation: I run a set of data collection scripts on my home network and one of the pieces of data is getting the computer model. In all my other SBCs, the below symlink gets that data.

Symlink: /proc/device-tree/model

File Location: /sys/firmware/devicetree/base/model

The symlink is broken, and when I went to check the firmware directory, it was completely empty. The last update date for /sys/firmware according to ls -la is December 10 at 2:40, which, when I checked my backups, is when core_2023.12.0 installed.

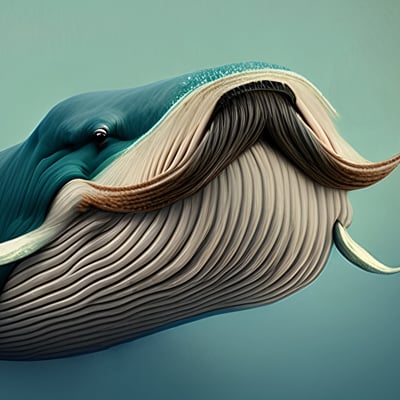

Attached is what should be in the firmware folder on my other Raspberry Pi 4 Model B Rev 1.5 right now.

I did a find from root for either the model file or anything vaguely resembling it and I can’t find it. Anyone else have this problem or is it just happening to me? Or am I missing something?
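For anyone who wants to check the same thing on their own box, here is a minimal Python sketch of the two checks described above: whether a path is a dangling symlink, and whether a directory exists but is empty. The function names `link_state` and `dir_state` are made up for illustration. It also assumes that /proc/device-tree itself is the symlink (pointing at /sys/firmware/devicetree/base), which is how it looks on the systems I know of but may not hold everywhere.

```python
import os


def link_state(path):
    """Classify a path as 'ok', 'broken-symlink', or 'missing'."""
    # os.path.exists() follows symlinks, so a link whose target is gone
    # reports islink() == True and exists() == False.
    if os.path.islink(path) and not os.path.exists(path):
        return "broken-symlink"
    if os.path.exists(path):
        return "ok"
    return "missing"


def dir_state(path):
    """Classify a directory as 'missing', 'empty', or 'populated'."""
    if not os.path.isdir(path):
        return "missing"
    return "populated" if os.listdir(path) else "empty"
```

Usage would be something like `link_state("/proc/device-tree")` and `dir_state("/sys/firmware")`; in the situation described in this post I would expect the first to report a broken symlink and the second to report an empty directory, but that is a guess from the symptoms, not something verified.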

Every time I read of issues like this, I so much wish ha devs could be bothered to complement the rolling release as it is today with a quarterly “stable” branch that gets all the bug fixes and patches but none of the monthly new features.

The stable branch could lag 3 months in features; the latest features don’t matter when all you want is a recently patched and updated system that runs your house without going bzzpth.

Since probably October, I’ve noticed some really really random problems show up that never used to. And for once, I know it wasn’t me messing with the code; I took a sabbatical from HA to learn how to use Proxmox a couple of months ago, and everything worked fine. It was actually a clean install to a new Raspberry Pi as my Odroid decided to stop working and I haven’t had time to learn to solder (hopefully this week, tho). I was kind of wondering if it was the Pi that was the problem.

I always submit this idea any time there’s a “Month of What The Heck”. Devs don’t care much for it. Honestly at this point I’d be happy to give up all new features and just stay on a stable branch.

Yeah, it’s totally a fun, feature-driven project reliant on community efforts, even though there is a commercial venture behind it nowadays. The core devs still treat it as their baby hobby project, and nobody wants to do the boring job of maintaining a stable branch, so it’s not going to happen.

A while ago I saw another discussion on this topic that was shot down with the opposing arguments that all users have to do is stay up to date with the latest version, while also saying that users are at fault for things breaking because they update when a notification tells them that there is a new latest version.

I think it’s arrogant and irresponsible and a ticking time bomb for a big time bug or zero day exploit and not how any serious project should be administered.

I wish I could have Linus Torvalds give his colorful opinion on this mindset for developing the operating system for people’s homes.

I think you are more than welcome to make a Fork and do this. You can backport all fixes and not implement new features.

I think new features are one of the core reasons the project gets more contributors, and it sorely needs those. I understand why the focus is now on that.

If you want stability, I’d suggest finding some like-minded people and maintaining a Long Term Stable version.

Indeed I could, but this is the boring job you have the paid employees for, rather than putting it on users to ensure a stable version of your product.

I would definitely post this directly on the hass forums if you haven’t already

I know, I’m trying to write up a clear bug report on this, but I’m honestly not sure if it actually has any effect other than messing up my data collection scripts. Yeah, it’s annoying the hell out of me but I’ve been going through the documented issues with the core and it doesn’t look like anyone else noticed a problem. I’ve been trying to figure out if it’s created by an alpine package that I can run, but not much luck there.

Note: I enabled root for Home Assistant OS and the symlink and file are fine there.

deleted by creator

Which is why I’m not sure I need a bug report. The part I have non-root access to is inside a docker container and that’s all I needed to collect data. But it’s such a random thing to go missing since that core update.

deleted by creator

Full disclosure: I just–and I mean just–got my head wrapped around docker and containers due to installing Proxmox on my server. Right now, my Proxmox server runs an LXC container for docker, and in docker I run Handbrake and MakeMKV images that run the GUIs in a browser or run with command line. They connect to each other through mounting the LXC’s /home/user into both; then I added a connection to the remote shares on my other server so I can send the results to my media server. Yes, I did have to map all the mountings out first before I started but hey, that’s how I learn.

Long way of saying: I am just now able to start understanding how Home Assistant works–someone said Home Assistant OS was basically really a hypervisor overseeing a lot of containers and now that I use Proxmox, that really helped–but I’m still really unfamiliar with the details.

I installed the full Home Assistant on a dedicated Pi4, so it’s the only thing it does. Until yesterday, the only part I actually interacted with was the data portion, which is where all my files are, where I configure my GUI and scripts, store addons, etc. The container for this portion runs on Alpine Linux; I can and have and do install/update/change/build packages I need or like to use in there. It’s ephemeral; anything I do outside the data directory (it holds /config, /addons, etc) gets wiped clean on update, so I reinstall them whenever HA does an update.

When I run my data collection scripts on my Home Assistant SBC, they take their information from the container, aka Alpine Linux, including saying my OS was Alpine. All of this worked correctly up until, according to the directory dates, December 10th at 2:40 AM, when /sys/firmware was last updated and everything in it vanished, breaking the symlink at /proc/device-tree/model. The same update also moved the container OS to Alpine 3.19.0. Data collection runs hourly; one of my Pis ssh’s into each computer to run four data collection scripts and updates a browser page I run off Apache, so I can check current presence and network status and also check the OS/hardware/running services of all my computers from the browser (the services script doesn’t work on Alpine yet; different structure). I didn’t notice until recently because work got super busy, so I only verified availability and network status regularly.

These are the packages I install, or switch to an updated/different version of, in the Alpine container to help with this or just have fun:

- figlet (it’s just cute ASCII art for an ssh banner)

- iproute2 (network info; the updated version has an option to output network info as JSON, which I store in a variable)

- iw (wireless adapter info)

- jq (reads and processes json files)

- procps-ng (updated uptime package for more options)

- sed (updated can do more than the installed one)

- util-linux (for column command in bash)

- wireless-tools (iwconfig, more wireless data if iw doesn’t have it)

(Note: I think tr may also be updated by one of these.)
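To show what the iproute2 JSON option buys you, here is a rough Python sketch (not my actual scripts, which are shell plus jq) that parses the output of `ip -j addr show`. The function names are made up for illustration, and the field names (`ifname`, `operstate`, `addr_info`, `family`, `local`) are what I understand current iproute2 to emit; treat them as an assumption and check against your own output.

```python
import json
import subprocess


def parse_ip_json(text):
    """Map each interface name to its link state and IPv4 addresses.

    `text` is assumed to be the JSON array printed by `ip -j addr show`.
    """
    result = {}
    for iface in json.loads(text):
        ipv4 = [
            addr["local"]
            for addr in iface.get("addr_info", [])
            if addr.get("family") == "inet" and "local" in addr
        ]
        result[iface["ifname"]] = {
            "state": iface.get("operstate"),
            "ipv4": ipv4,
        }
    return result


def collect_interfaces():
    """Run `ip -j addr show` and parse it (needs iproute2 with JSON support)."""
    proc = subprocess.run(
        ["ip", "-j", "addr", "show"],
        capture_output=True, text=True, check=True,
    )
    return parse_ip_json(proc.stdout)
```

Keeping the parsing separate from the command call means the parser can be tried against a saved sample without touching the network stack.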

These are the ones I use for data collection that are already installed:

- lscpu (“Model name” “Vendor ID” “Architecture” “CPU(s)” “CPU min MHz” “CPU max MHz”)

- uname (kernel)
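As a sketch of how those lscpu fields and the kernel version could be pulled out in Python (again, not my real scripts; `parse_lscpu` and `collect_cpu_info` are hypothetical names), assuming lscpu prints `Key: value` lines, possibly indented:

```python
import os
import subprocess

# The lscpu fields listed above.
WANTED = (
    "Model name", "Vendor ID", "Architecture",
    "CPU(s)", "CPU min MHz", "CPU max MHz",
)


def parse_lscpu(text, wanted=WANTED):
    """Extract the wanted `Key: value` pairs from lscpu output."""
    info = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        key = key.strip()
        # Exact key match, so "On-line CPU(s) list" is not mistaken for "CPU(s)".
        if sep and key in wanted and key not in info:
            info[key] = value.strip()
    return info


def collect_cpu_info():
    """Run lscpu and add the kernel release (what `uname -r` reports)."""
    out = subprocess.run(
        ["lscpu"], capture_output=True, text=True, check=True
    ).stdout
    info = parse_lscpu(out)
    info["Kernel"] = os.uname().release
    return info
```

Fields that a given board does not report (the min/max MHz lines, for instance) are simply absent from the result rather than raising an error.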

These are the files I access for data collection:

- /proc/device-tree/model (Computer model)

- /proc/meminfo (RAM)

- /proc/uptime (Uptime)

- /etc/os-release (Current OS data)

- /sys/class/thermal/thermal_zone0/temp (CPU temperature for all my SBCs except BeagleBone Black)
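For reference, here is roughly what reading those files amounts to, written as a Python sketch instead of my shell scripts. The function names are invented for illustration. It assumes the usual formats: `Key: value kB` lines in /proc/meminfo, uptime in seconds as the first field of /proc/uptime, `KEY=value` lines in /etc/os-release, and millidegrees Celsius in the thermal zone file.

```python
def parse_meminfo(text):
    """Return /proc/meminfo numbers as a dict (values in kB where the file says kB)."""
    values = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts and parts[0].isdigit():
            values[key.strip()] = int(parts[0])
    return values


def parse_uptime(text):
    """Return uptime in seconds from /proc/uptime (first field)."""
    return float(text.split()[0])


def parse_os_release(text):
    """Return /etc/os-release as a dict, stripping surrounding quotes."""
    data = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        data[key] = value.strip().strip('"').strip("'")
    return data


def parse_thermal(text):
    """Convert a thermal_zone temp reading (millidegrees C) to degrees C."""
    return int(text.strip()) / 1000.0


def read_text(path):
    """Read a small text file such as the ones under /proc or /sys."""
    with open(path, "r", encoding="utf-8", errors="replace") as f:
        return f.read()
```

A call like `parse_os_release(read_text("/etc/os-release"))` inside the HA container is what would report Alpine rather than Home Assistant OS, which matches what my scripts were seeing.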

Until this month, all of those files were accessible both before I do the package updates and after. The only one affected was maybe /proc/uptime by the uptime update to get more options. Again: I’ve been running these scripts or versions of them for well over a year and I test individually on each SBC before adding them to my data collection scripts to run remotely; all of these worked on every computer, including whatever SBC was running Home Assistant (Odroid N2+ until it died a few months ago). And all of them work right now–except /proc/device-tree/model on my Home Assistant SBC. The only way I can get model info is to add an extra ssh to Home Assistant itself as root and grab the data off that file (and while I’m there, get the OS data for Home Assistant instead of Alpine), save it to my shell script directory in my data container, and have my script process that file for my data after it gets the rest from the container.
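The workaround boils down to "try the live file, fall back to the copy the root ssh saved". A minimal Python sketch of that logic, with a hypothetical function name and with both paths passed in as parameters since the saved-copy location is whatever you choose:

```python
def model_with_fallback(live_path, cached_path):
    """Return (model, source), where source is 'live' or 'cached'.

    Tries the live device-tree file first, then a copy saved earlier
    (for example by a separate root ssh step). Returns (None, None)
    if neither can be read.
    """
    for source, path in (("live", live_path), ("cached", cached_path)):
        try:
            with open(path, "rb") as f:
                raw = f.read()
        except OSError:
            # Missing file, broken symlink, or no permission: try the next one.
            continue
        # Device-tree strings end in a NUL byte; drop it and any whitespace.
        text = raw.rstrip(b"\x00").decode("utf-8", "replace").strip()
        if text:
            return text, source
    return None, None
```

For example, `model_with_fallback("/proc/device-tree/model", "/config/scripts/ha_model.txt")`, where the second path is only a placeholder for wherever the saved copy lives. Returning the source alongside the value makes it easy to flag on the status page when the data is coming from the cached copy.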

That’s why I’m weirded out; this is one of the things that is the same on every single Linux OS I’ve used and on Alpine, so why on earth would this one thing change?

This could conceivably be an Alpine issue; I downloaded Alpine 3.19.0 to run in Proxmox when I get a chance, and I kind of hope that it’s a deliberate change in Alpine, because otherwise, I can’t imagine why on earth the HA team would alter Alpine to break that symlink. Or they could be templating Alpine for the container each time and this time it accidentally broke. The entire thing is just so weird. Or maybe–though not likely–a bug in Alpine 3.19.0, but I doubt it; I can’t possibly be the first to notice, it was released at least three weeks ago and I googled a lot.

I’m honestly not sure it affects anything at all, but it bothers me so here we are. Though granted, it did make me finally get off my ass and figure out how to log in as root into HA as well as do a badly needed refactor of my main data collection script (the one that does the ssh’ing) as well as clean and refactor my computer information scripts, so maybe it was destiny.

deleted by creator

You know, I didn’t think of that. I’ve never run an OS in docker; all I tested my data collection scripts on were my regular VMs a few times just for fun. And for that matter, most LXC containers I run in Proxmox are privileged to get around restrictions (still haven’t found a way for LXCs to let me compile different architectures, though). HA may have updated their docker to current, which would explain why it happened so suddenly.

And yes, for now, I’ll just do root login to grab the information; it’s technically more accurate. I am just knee-jerk distrustful of using root, to the point that until Proxmox and this last year, I had almost forgotten it existed unless there was a very weird Linux problem I needed it for. Thanks for this information, though; I’ve only just started seriously working with LXC and docker containers, so that’s not an approach I would have considered.